Tiiny AI has confirmed that its Pocket Lab AI mini PC will launch on crowdfunding at an early price of $1,399. The ultra-compact system is built for local large language model inference, giving developers and enterprises an alternative to cloud AI platforms such as OpenAI and to the recurring API costs that come with them.

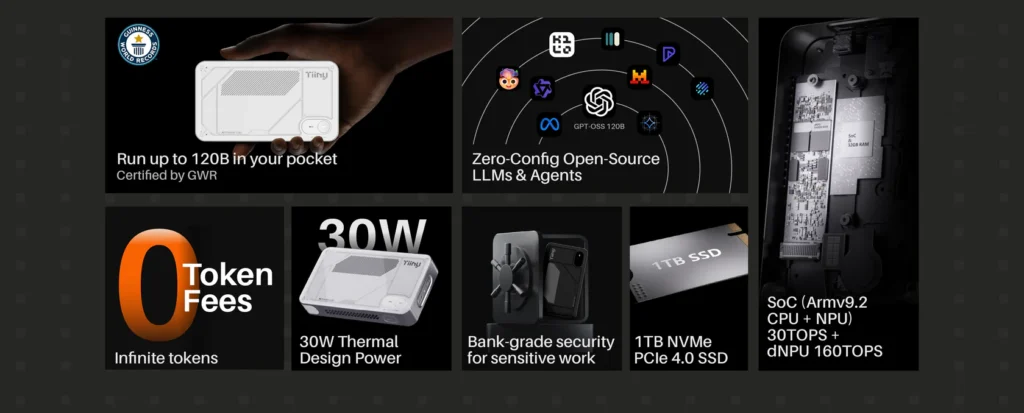

The Pocket Lab is powered by a 12-core Arm processor and a 160 TOPS discrete neural processing unit, along with an additional 30 TOPS NPU integrated into the SoC. The system includes 80GB of LPDDR5X memory and a 1TB PCIe 4.0 NVMe SSD, all operating within a 30W thermal design envelope. The company is clearly targeting high-density AI inference rather than traditional desktop computing or gaming workloads.

Unlike performance-oriented mini PCs such as the NZXT H2 with RTX 5080 graphics or systems powered by Ryzen AI 9 HX 370 and Intel Raptor Lake HX processors, the Pocket Lab prioritizes neural processing performance over GPU-based gaming or general desktop tasks.

The device measures approximately 142 × 80 × 22 mm and weighs about 0.66 lbs (300 grams). That makes it closer in size to a power bank than a typical desktop PC. Despite the compact design, the memory capacity stands out. Most consumer laptops ship with far less RAM, which limits the size of language models they can run locally.

Tiiny AI states that the Pocket Lab can handle models up to 120 billion parameters. At 16-bit precision, the weights of a model that size would need roughly 240GB, three times the device's memory. With 4-bit quantization, the weights shrink to roughly 60GB. That may make 120B-class quantized models feasible within an 80GB memory configuration, with the remainder left for the KV cache, activations, and the operating system.
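The memory arithmetic behind the 120B claim can be sketched in a few lines. This is a rough, weights-only estimate: the function name and the list of precisions are illustrative choices, not anything published by Tiiny AI, and real quantized formats add some overhead for scales and metadata.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights alone, in decimal GB.

    Ignores KV cache, activations, and quantization metadata (assumption).
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


MEMORY_GB = 80   # Pocket Lab memory capacity, from the article
PARAMS_B = 120   # model size claimed by Tiiny AI, in billions of parameters

# Bits per weight for a few common precisions (illustrative selection).
PRECISIONS = [("FP32", 32), ("FP16", 16), ("INT8", 8), ("INT4", 4)]

footprints = {name: weight_memory_gb(PARAMS_B, bits) for name, bits in PRECISIONS}

for name, gb in footprints.items():
    verdict = "fits" if gb <= MEMORY_GB else "does not fit"
    print(f"{name}: {gb:.0f} GB of weights, {verdict} in {MEMORY_GB} GB")
```

Under these assumptions, only the 4-bit case lands below 80GB, and the headroom it leaves has to cover everything else the system keeps in memory during inference.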

According to the company, the system can deliver around 21 tokens per second on GPT-OSS 120B-class configurations, with sub-second first-token latency under specific conditions. Actual performance will depend on factors such as sustained thermals, memory bandwidth, and software optimization during longer workloads.

When it comes to AI performance, the 160 TOPS discrete NPU is the number that stands out. The NPUs in most consumer chips, including those from companies like Apple, are built to handle lighter AI features such as photo enhancements or background system tasks. They are not designed to continuously run large language models. The Pocket Lab, on the other hand, is clearly built with one goal in mind: running LLM workloads directly on the device.

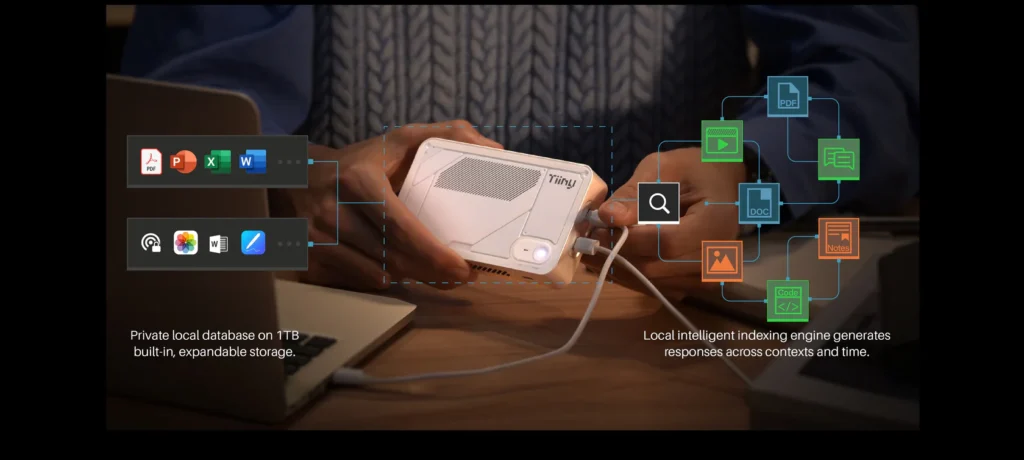

Memory capacity may ultimately be more important than peak TOPS numbers. Large language models are often limited by available RAM rather than compute alone. With 80GB of high-speed LPDDR5X memory running at 6400 MT/s, the Pocket Lab pushes beyond the limits of most compact systems. This positions it as a private, on-device AI workstation for developers, startups, and enterprises that want predictable costs and greater data control.

The broader mini PC market in 2026 has largely focused on compact gaming systems and productivity machines, including models such as the GMKtec G3 Pro, Thunderobot Mix Pro II, Beelink SER10 Max, and Chuwi AuBox X1. Those systems emphasize CPU and GPU performance, while Tiiny AI’s Pocket Lab is built specifically for sustained AI inference workloads.

Tiiny AI highlights hardware-level AES-256 full-disk encryption and user-controlled keys stored in a secure enclave. All inference runs fully on the device, which may appeal to organizations handling sensitive data or operating under regulatory requirements in the United States.

The company promotes an SDK, workflow agent APIs, model management tools, and OpenAI-compatible endpoints. If API compatibility works as intended, developers may be able to shift certain cloud-based AI workloads to local inference with limited code changes. The platform will also receive over-the-air updates, suggesting continued software development beyond the initial hardware release.
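To illustrate what "limited code changes" could mean in practice, here is a minimal sketch of a chat request in the widely used OpenAI-style format, aimed at a local server instead of the cloud. The base URL, port, and model name are hypothetical placeholders, since Tiiny AI has not published its endpoint details, and the example only builds the request rather than sending it.

```python
import json
import urllib.request

# Hypothetical values: the real address and model identifier are not
# documented in the article and would come from Tiiny AI's SDK docs.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "local-model"


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "not-needed") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a given base URL."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request(BASE_URL, MODEL_NAME, "Summarize this contract clause.")
print(request.get_method(), request.full_url)

# Sending is left commented out because it needs a running local server:
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)
#     print(reply["choices"][0]["message"]["content"])
```

If the compatibility claim holds, moving an existing workload would mostly come down to changing the base URL and model name, while the request and response handling stay the same.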

As with most crowdfunding hardware projects, real-world validation will be important. Running large quantized models inside a 30W power envelope raises questions about sustained thermal performance under heavy inference loads. Independent testing will ultimately determine how stable token generation speeds remain during extended sessions.

If Tiiny AI delivers on its stated specifications, the Pocket Lab could mark a meaningful step toward portable, self-contained AI systems capable of running advanced large language models without relying on external servers. Whether it becomes a niche developer tool or part of a broader shift toward decentralized AI infrastructure will depend on execution, performance validation, and enterprise adoption over time.

Key Specifications

| Specification | Details |
| --- | --- |
| CPU | 12-core Armv9.2 processor |
| Discrete NPU | 160 TOPS (INT8) |
| Integrated NPU | 30 TOPS |
| Memory | 80GB LPDDR5X, 6400 MT/s |
| Storage | 1TB PCIe 4.0 NVMe SSD |
| Thermal Design Power | 30W |
| Dimensions | 142 × 80 × 22 mm |
| Weight | Approximately 300 grams |
| Connectivity | Wi-Fi 6, Bluetooth 5.3 |
| Interfaces | 3 USB-C ports |
| OS Compatibility | macOS and Windows |

Source: Tiiny AI