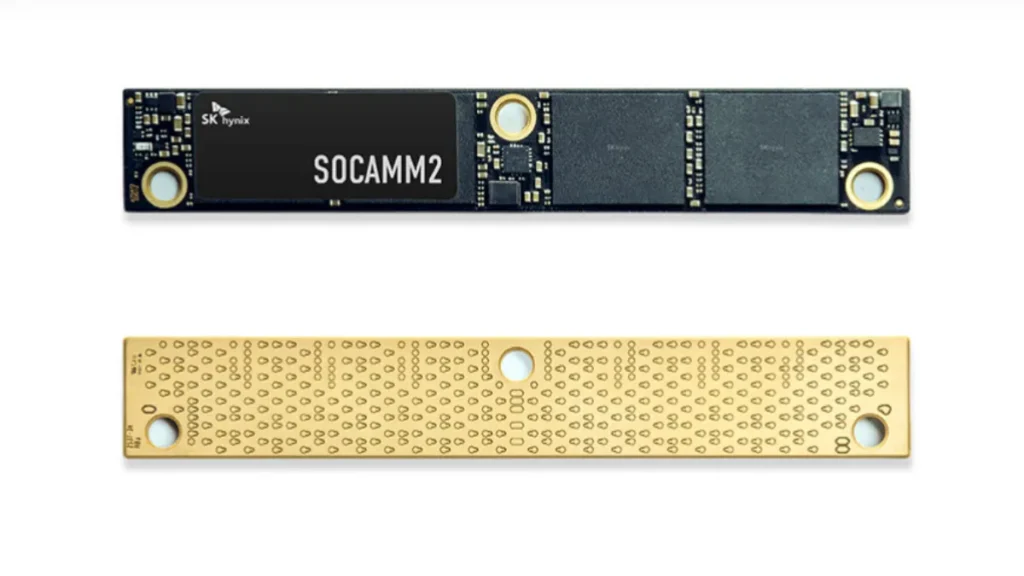

SK hynix has started mass production of its 192GB SOCAMM2 memory module, taking aim at one of the biggest challenges in modern AI infrastructure: memory bandwidth. Built on the company's 1cnm (sixth-generation 10nm-class) LPDDR5X DRAM, the module is designed to support large-scale AI workloads, including training and inference for large language models.

As AI models get bigger and more complex, memory performance is starting to matter just as much as raw compute power. Moving data efficiently within servers has become a big deal, and if memory bandwidth falls short, it can directly limit GPU performance.

SOCAMM2 handles memory a bit differently compared to traditional RDIMM setups. It uses a more compact, modular design that helps pack in higher density while also improving signal quality and scalability. That kind of setup fits well in AI servers, where space, power, and performance all need to be balanced carefully.

One big change here is the move to LPDDR5X memory in a server setup. This type of memory is usually found in mobile devices because it uses less power, but SK hynix has adapted it for data center use.

The idea is to bring better efficiency without sacrificing performance. The company says the module can deliver more than double the bandwidth and improve power efficiency by over 75 percent compared to traditional RDIMM solutions.

Large language models need constant access to huge amounts of data, and when memory bandwidth isn’t enough, GPUs can’t run at full speed. That ends up slowing everything down. By boosting throughput and cutting power usage, SOCAMM2 helps keep AI systems running more efficiently, especially in large clusters.
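The bottleneck described above is often reasoned about with a simple roofline-style estimate: achievable throughput is capped either by peak compute or by how fast memory can feed data, whichever is lower. The sketch below is only an illustration of that idea. The function name and every number in it are made up for the example and are not SK hynix or NVIDIA figures.

```python
def attainable_throughput(peak_compute, mem_bandwidth, ops_per_byte):
    """Roofline-style upper bound on throughput.

    peak_compute  -- peak operations per second the processor can perform
    mem_bandwidth -- bytes per second the memory system can deliver
    ops_per_byte  -- operations the workload performs per byte it moves
    """
    # Memory can only sustain this many operations per second.
    memory_limit = mem_bandwidth * ops_per_byte
    # The slower of the two limits sets the ceiling.
    return min(peak_compute, memory_limit)


# Hypothetical, unitless numbers chosen only to show the shape of the effect.
PEAK = 100.0

# Memory-bound workload: few operations per byte moved.
baseline = attainable_throughput(PEAK, mem_bandwidth=1.0, ops_per_byte=20.0)
doubled = attainable_throughput(PEAK, mem_bandwidth=2.0, ops_per_byte=20.0)

# Compute-bound workload: many operations per byte moved.
compute_bound = attainable_throughput(PEAK, mem_bandwidth=2.0, ops_per_byte=200.0)

print(baseline, doubled, compute_bound)
```

In this toy setup, doubling bandwidth doubles the ceiling for the memory-bound workload, while the compute-bound one stays pinned at peak compute. That is the sense in which extra bandwidth only helps when memory is the limiting factor.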

The module has also been developed with next-generation platforms such as NVIDIA’s upcoming Vera Rubin architecture in mind. This reflects a broader industry trend where memory, compute, and interconnect technologies are being designed together to maximize performance.

There’s also a bigger shift happening toward training-heavy AI workloads. Training needs much more bandwidth and memory capacity than inference, which puts extra strain on server hardware. SOCAMM2 is built to handle that load while keeping power usage in check, something that really matters in large-scale data centers.

Looking at the bigger picture, this points to a shift in how server memory is evolving. Traditional DIMM setups aren’t going away, but they’re starting to be complemented by newer formats like CAMM and SOCAMM, along with LPDDR-based memory making its way into enterprise systems. It’s a sign that future AI hardware will lean more on specialized memory designed for high-performance workloads.

With mass production already underway, SK hynix is getting in early on a rapidly growing part of the AI hardware market. As spending on AI infrastructure continues to rise worldwide, advances in memory design will likely have a significant impact on system performance, efficiency, and scalability.

SK hynix 192GB SOCAMM2: Key Specifications

| Specification | Details |
| --- | --- |
| Capacity | 192GB |
| Memory Type | LPDDR5X |
| Process Node | 1cnm (6th-gen 10nm-class) |
| Bandwidth | More than 2× vs RDIMM |
| Power Efficiency | Over 75% improvement vs RDIMM |
| Form Factor | SOCAMM2 |
| Target Platform | AI servers (e.g., NVIDIA Vera Rubin) |

Source: SK hynix